The drawback of this technique is that we only ever explore the data the algorithm decides is relevant or important. The lower the Point Budget, the more these algorithmic decisions affect the final output. There are ways around this, for example, using a larger point size to blend points into surfaces. However, that is essentially synthetic and not “real” data.

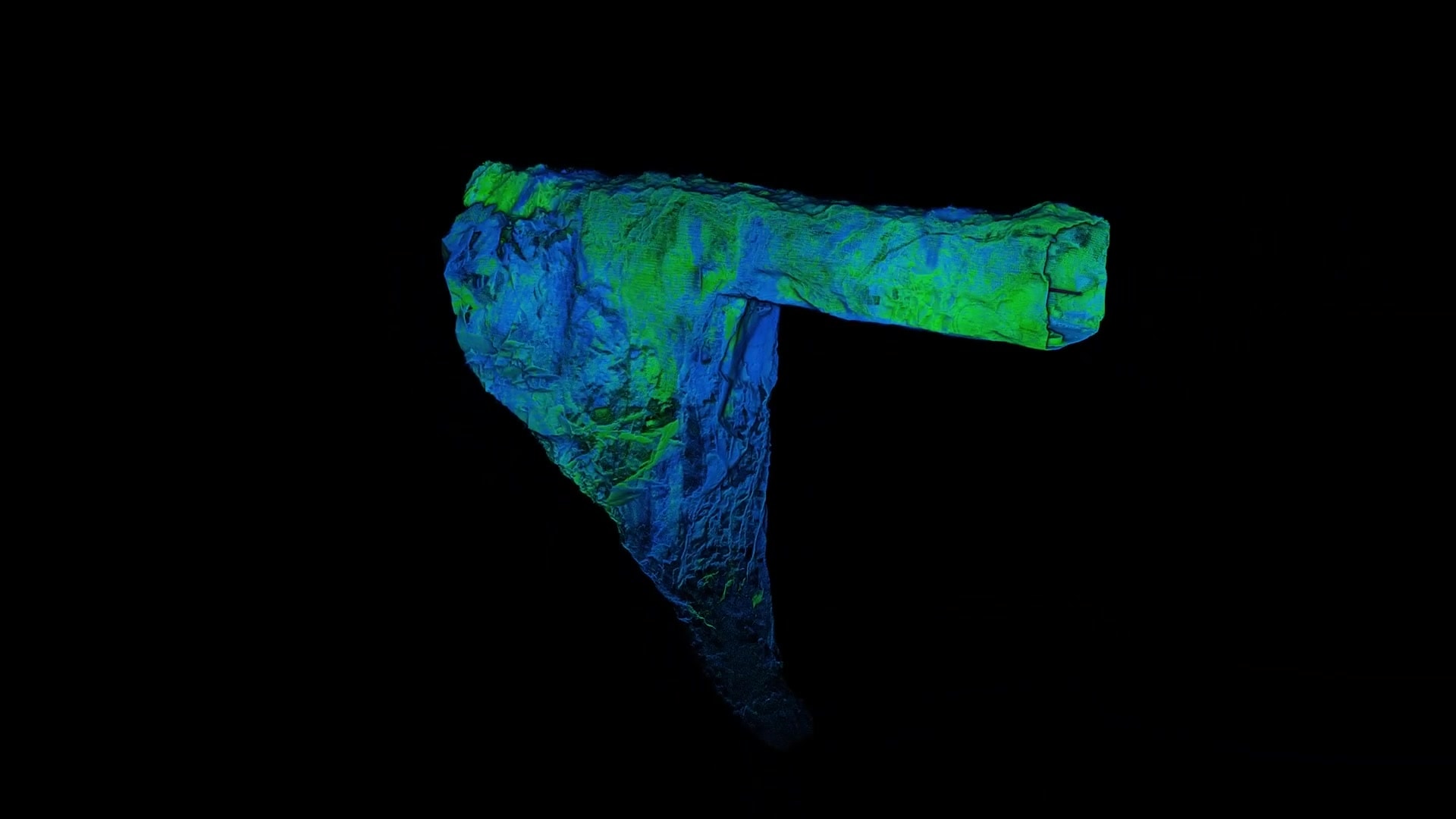

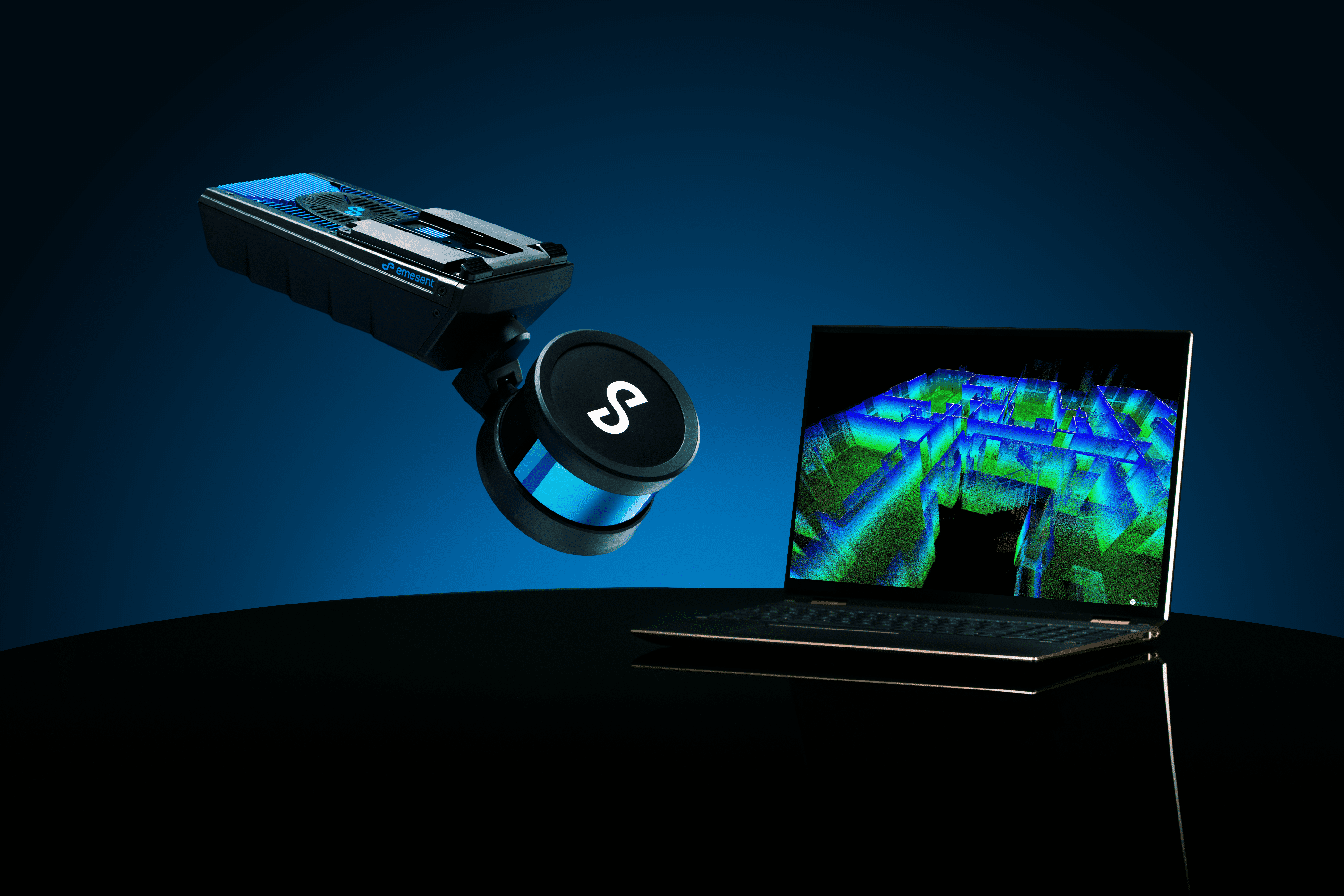

This kind of blending isn’t necessary for Hovermap data, as it produces very dense point clouds, and the detail is there without needing to be synthesized. So, since starting the project, we have been extending the plugin to cater for our dense data.

One of our early extensions was to add support for the point attributes that are produced by our SLAM processing software. We included support for displaying intensity, time, ring number, range, and true color attributes.

Another was to raise our single-frame render budget to 100 million points, well beyond what Unreal provided out of the box.
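To make the effect of a point budget concrete, here is a minimal sketch of budget-limited selection. It is not the plugin's actual algorithm: the `Node` class, the `priority` score, and `select_nodes` are hypothetical names invented for illustration, and the greedy strategy is only an assumption about how a renderer might choose what to draw.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """Hypothetical batch of points (e.g. one octree node) with a priority score."""
    name: str
    point_count: int
    priority: float  # assumed: higher means more visually important


def select_nodes(nodes, point_budget):
    """Greedily keep the highest-priority nodes that still fit in the budget."""
    selected = []
    remaining = point_budget
    for node in sorted(nodes, key=lambda n: n.priority, reverse=True):
        if node.point_count <= remaining:
            selected.append(node)
            remaining -= node.point_count
    return selected


# Illustrative numbers only, not measurements from real scans.
nodes = [
    Node("near_wall", 600_000, 0.9),
    Node("floor", 300_000, 0.7),
    Node("far_detail", 400_000, 0.4),
    Node("ceiling", 100_000, 0.2),
]

low_budget = select_nodes(nodes, 1_000_000)
high_budget = select_nodes(nodes, 100_000_000)

print([n.name for n in low_budget])
print([n.name for n in high_budget])
```

With the small budget, one of the batches is dropped even though it contains real data; with the large budget, everything is drawn. That is the trade-off described above: the lower the budget, the more the selection logic shapes what you see.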